Co Cerebral · EduOSAn RCA thesis on what AI in education feels like when each cognitive mode has a voice and a body.

Co Cerebral is my RCA Design Futures thesis. It pairs Edward de Bono's Six Thinking Hats methodology with multi-agent LLM architecture — White, Red, Black, Yellow, Green, and Blue, each as a distinct agent. The thinking partner you talk to instead of read.

The Challenge

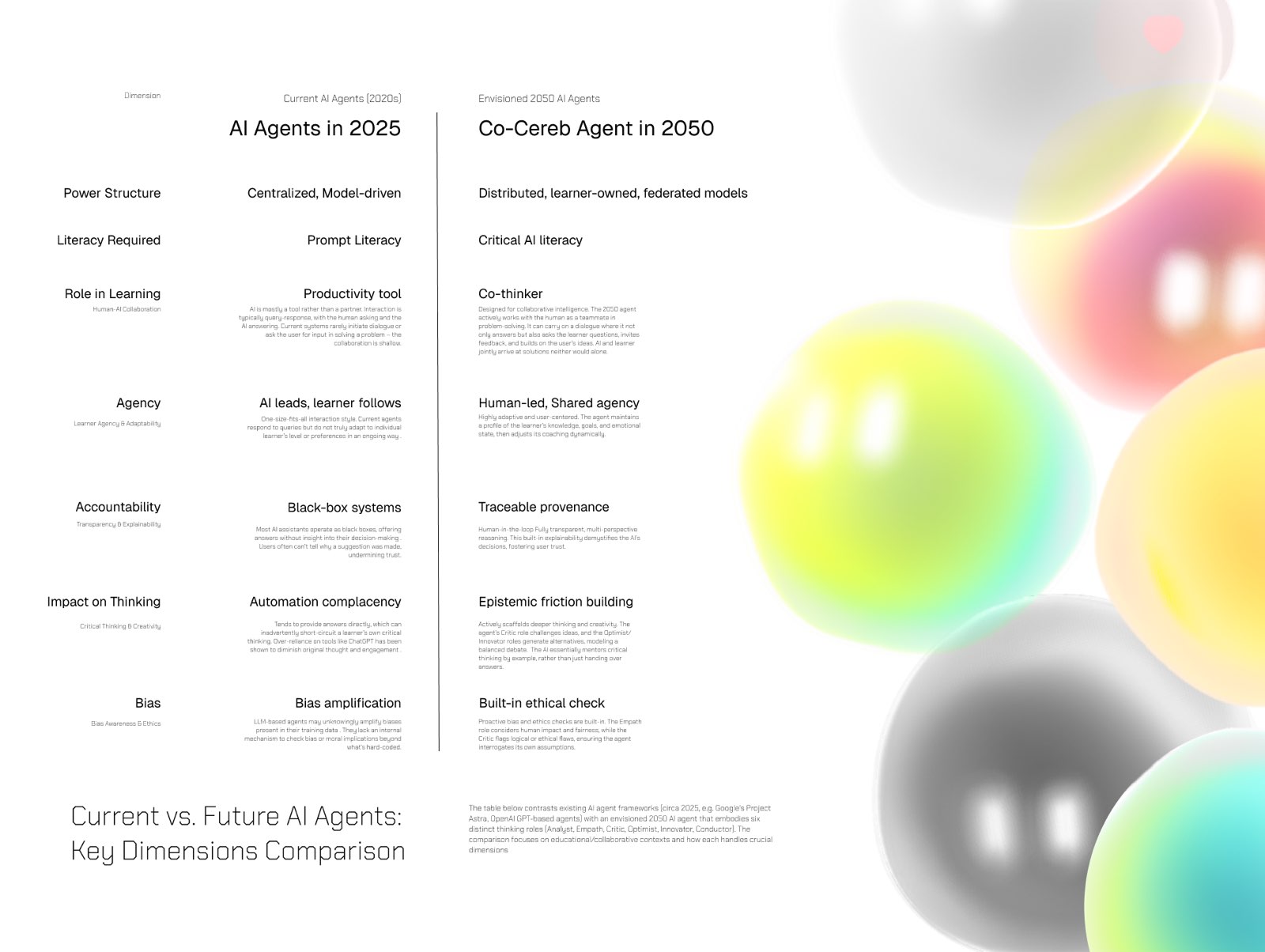

Most AI-in-education products today are static chat interfaces — text in, text out, no embodiment. As my RCA thesis on AI in Education, Co Cerebral asks a different question: what if each cognitive mode in a structured thinking framework had its own embodied agent — voice, visual presence, distinct personality — and you dialogued with the framework instead of reading about it?

The thesis is also a product hypothesis. The framing came from Edward de Bono's Six Thinking Hats — White (facts), Red (emotion), Black (critical), Yellow (optimistic), Green (creative), Blue (process) — where structured cognitive disagreement produces better thinking than open-ended chat.

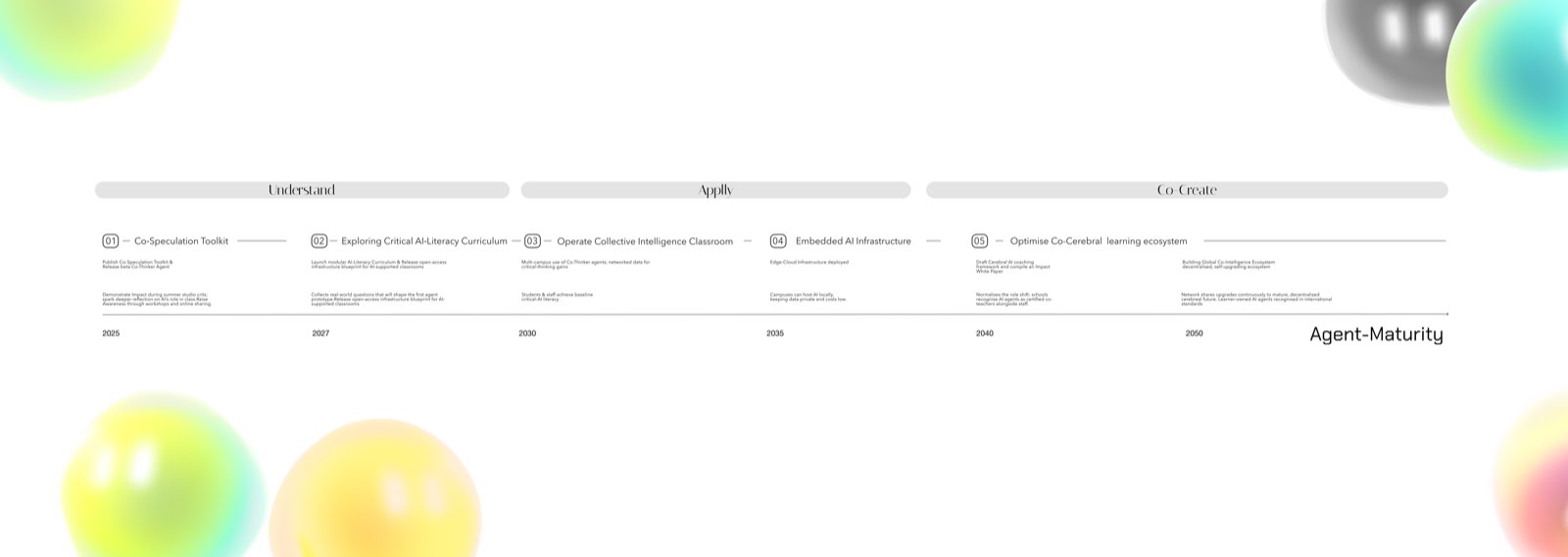

Research & Discovery

The research flow embedded above maps how a learner moves through the system: from a single question, through the rotation of perspectives, into synthesis — voice-mediated throughout.

It is a learning interface designed around cognitive disagreement, not consensus. The hat structure is a constraint, and the constraint is the feature.

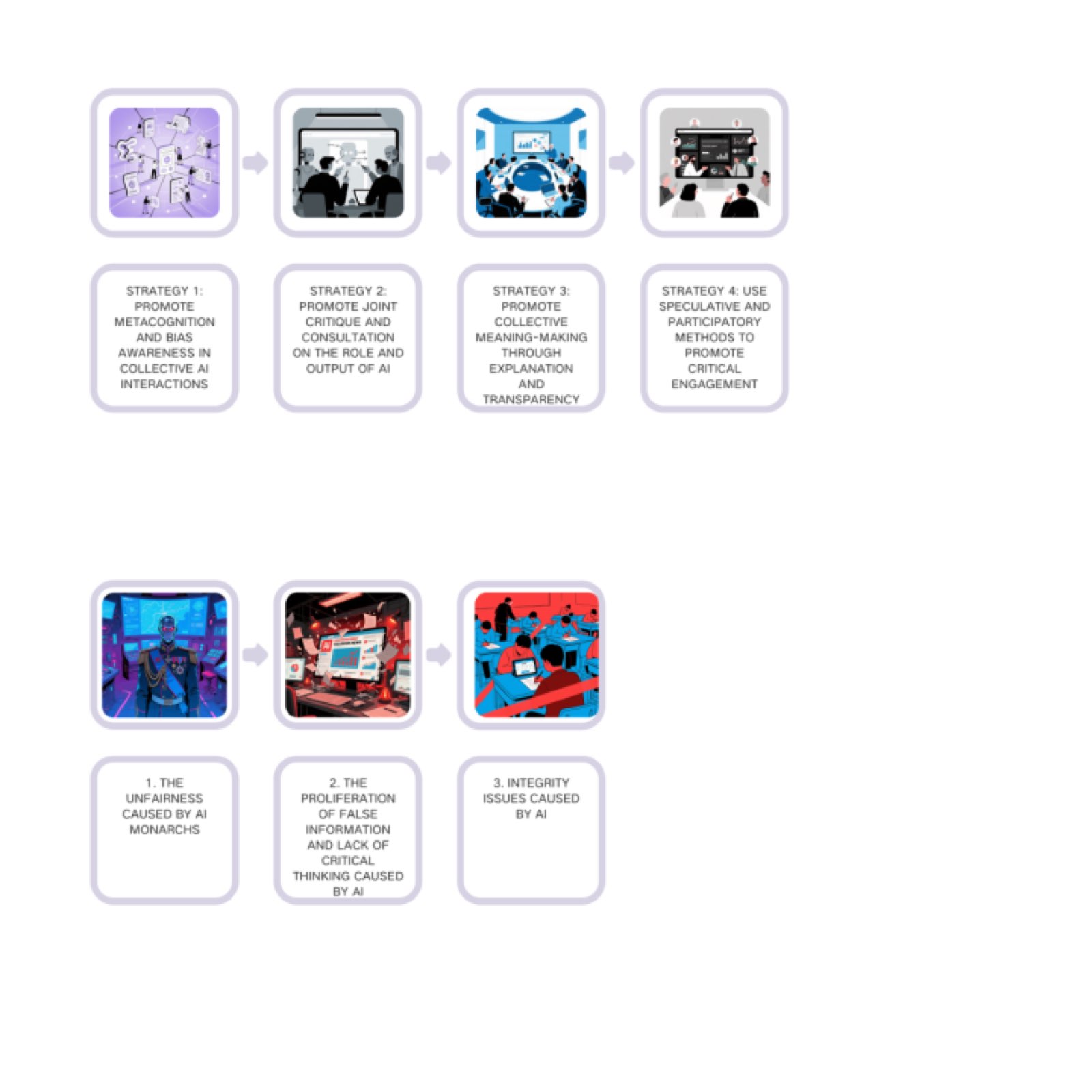

Design Strategy

Three design commitments anchor the build:

- Voice-first, not chat-first. Web Speech API for input and synthesis — the agent speaks back, you don't read.

- Embodied, not disembodied. Each hat has a Spline 3D presence; agents are inhabited, not summoned.

- Structured, not free-form. Six Hats is a constraint that produces better thinking than open-ended chat — the structure IS the feature, not a limitation.

Implementation & Pipeline

Tech stack:

- Frontend: Next.js 15 with TypeScript and Tailwind

- 3D: @splinetool/react-spline (the same library powering the embeds on this page)

- Spline Variables for material control across hat states

- Voice in: Web Speech API (free, browser-native)

- LLM: Vercel AI SDK v5 via AI Gateway

- Voice out: Web Speech Synthesis

- Deploy: Vercel Hobby

- Total ongoing cost: £0

This is intentional. A thesis that costs money to run is a thesis that doesn't survive past the deadline.

Results & Impact

The live demo above is the working prototype. Drag the scene to inspect each hat's embodiment; speak to summon an agent. As of this writing the system is functional end-to-end on a £0 stack — and I am still iterating.

Met a potential cofounder at Vercel Builder's Night who is interested in the education angle. Conversations are early; the project is no longer just a thesis demo, it is a possible product.

Lessons Learned

Two things stand out so far:

- Constraints over features. Adding voice was hard but it changed the entire interaction quality. The hardest design decision wasn't a feature; it was choosing modality.

- Spec stability. The Apple Note brief that started this rebuild has barely changed in two months — locking the tech stack early let me ship instead of debate.

What's Next

Immediate: publish the Spline scenes to public URLs and open the demo to cohort testing.

Then: decide K-12, corporate training, or adult learner as the wedge audience — currently undecided.

Eventually: if the cofounder conversation goes anywhere, productise the methodology framework, not the Six Hats specifically.